Architecture

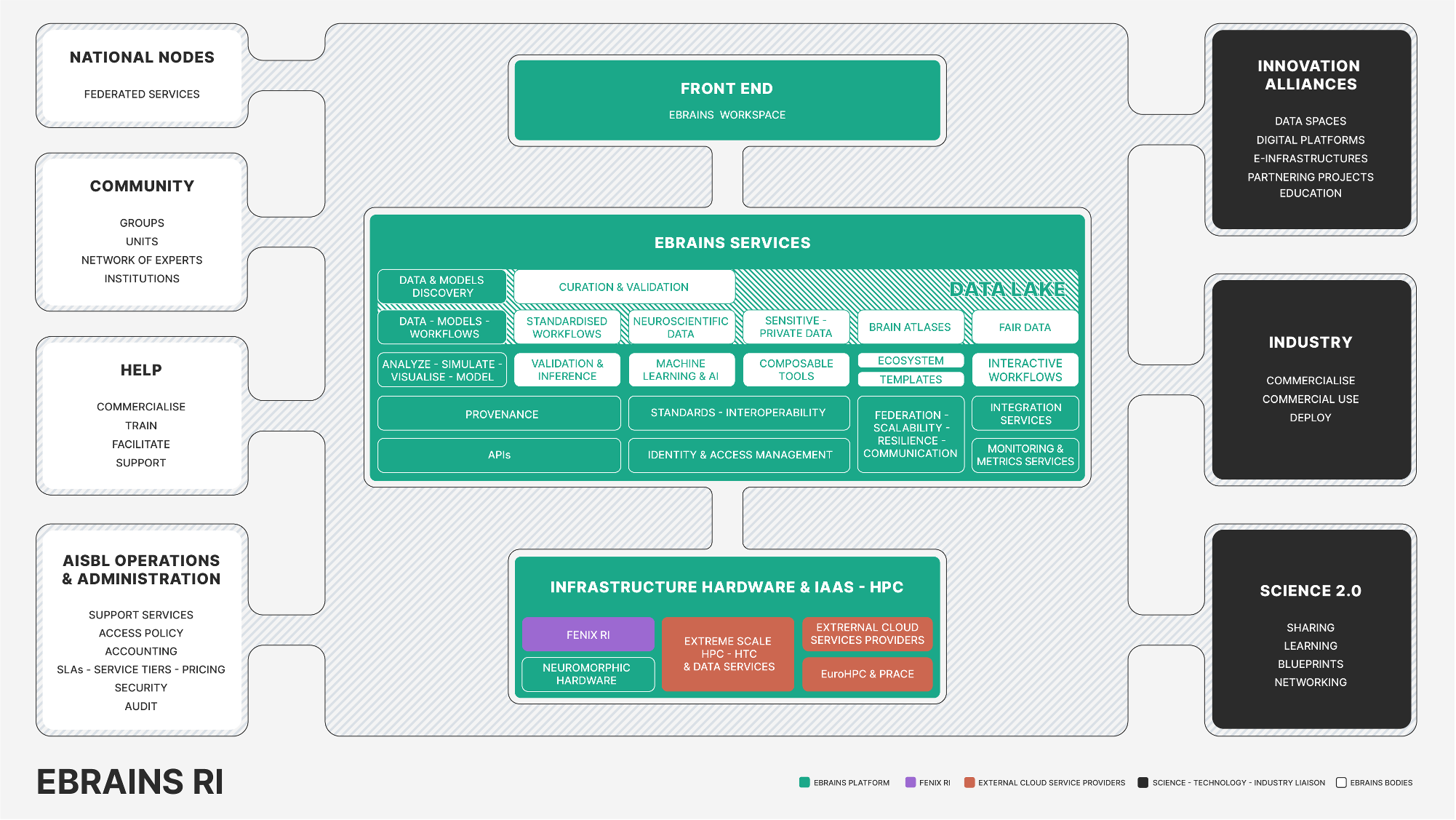

Figure 1 gives an overview of the EBRAINS RI, presenting the EBRAINS architecture in a more abstract and engaging form than the more comprehensive EBRAINS High-Level Overview. This visualization has become an essential element in portraying the structure of EBRAINS. Because it is an abstract representation, detailed information on the entities shown is left to additional documentation.

Furthermore, the diagram highlights the prospective role of the EBRAINS RI as a pivotal coordinator within a pan-European network. This network consists of federated services provided through National Nodes (NNs). These nodes consist of EBRAINS members who autonomously offer scientific expertise, software, services, and support, tailored to the needs of their local and thematic communities. These services and facilities, accessible via the EBRAINS platform, promote the growth of the EBRAINS user base, facilitate scientific exchange, and contribute to the advancement of neuroscience.

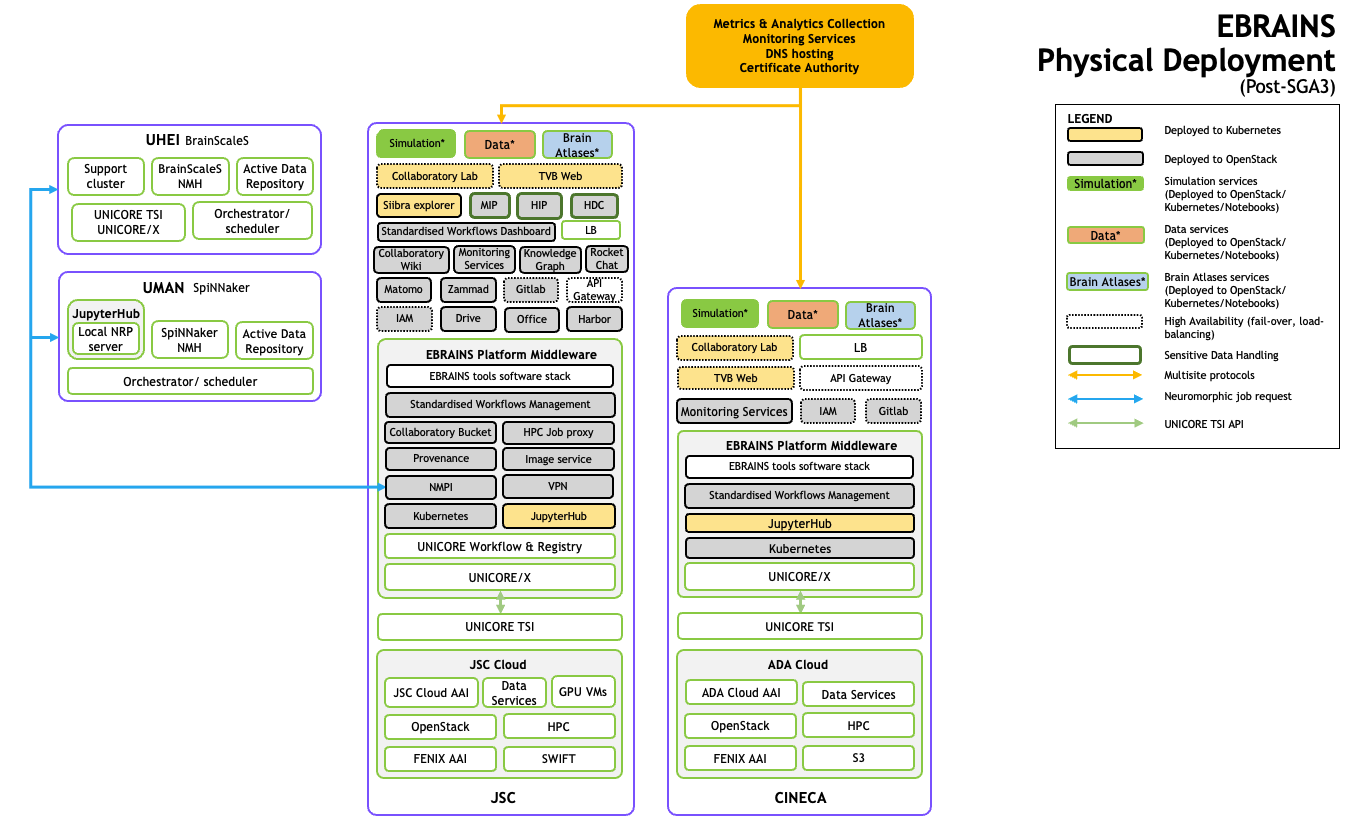

The architecture depicted in Figure 2 is a schematic of the EBRAINS Physical Deployment post-SGA3, showcasing the deployment and integration of various computing services and resources.

Key Elements

- UHEI BrainScaleS and UMAN: These two sections represent specialized clusters for neuroscience simulations and data repositories, with UMAN also including the SpiNNaker service for neuromorphic computing.

- Components: This section lists the main functional components of the architecture, such as Simulation, Data, and Brain Atlases. Each has associated services like the Collaboratory Lab and various web interfaces for data exploration and simulation.

- Common Services: Encompasses the shared resources and services that support the infrastructure, such as monitoring services, knowledge graph, and identity and access management (IAM).

- Infrastructure: Refers to the fundamental technologies that support the platform, including Kubernetes for container orchestration, JupyterHub for interactive notebooks, and UNICORE for job and data management.

- JSC and CINECA: These are two major computing centers involved in the deployment. They provide essential cloud and high-performance computing (HPC) services, including storage (SWIFT and S3) and virtual machines for GPU computations.

- Metrics & Analytics Collection: Covers services that monitor the performance and health of the infrastructure, together with DNS hosting and a Certificate Authority for security.

Understanding the Architecture

The architecture is organized into various layers, starting with the core infrastructure services at the bottom, moving up through middleware services that manage workflows and data, and up to the user-facing components that provide simulation, data management, and visualization tools.
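The layering described above can be sketched as a small data structure. This is a minimal illustration only: the `Layer` class, the `layers_below` helper and the assignment of individual services to layers are assumptions made for the example, not something the deployment diagram specifies.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str
    level: int                 # 0 = bottom of the stack
    examples: tuple[str, ...]  # illustrative members only


# Hypothetical grouping of names taken from the text into three layers.
LAYERS = (
    Layer("core infrastructure", 0, ("Kubernetes", "OpenStack")),
    Layer("middleware", 1, ("UNICORE", "IAM", "Knowledge Graph")),
    Layer("user-facing components", 2, ("Simulation", "Data", "Brain Atlases")),
)


def layers_below(name: str) -> list[str]:
    """Return the names of all layers beneath the given one, bottom first."""
    level = next(layer.level for layer in LAYERS if layer.name == name)
    ordered = sorted(LAYERS, key=lambda layer: layer.level)
    return [layer.name for layer in ordered if layer.level < level]


if __name__ == "__main__":
    print(layers_below("user-facing components"))
```

Modelling the stack this way makes the dependency direction explicit: a user-facing component relies on everything beneath it, while the core infrastructure relies on nothing else in the stack.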

Each service or component within the structure is designed to be resilient, scalable, and secure, with particular attention to handling sensitive data appropriately. The use of both Kubernetes and OpenStack technologies suggests a flexible and modern approach to infrastructure as a service (IaaS), allowing EBRAINS to deploy and manage applications in a distributed environment effectively.

In essence, the architecture provides a blueprint for the integrated digital infrastructure of EBRAINS, supporting the complex needs of neuroscience research and collaboration across various European computing centers.

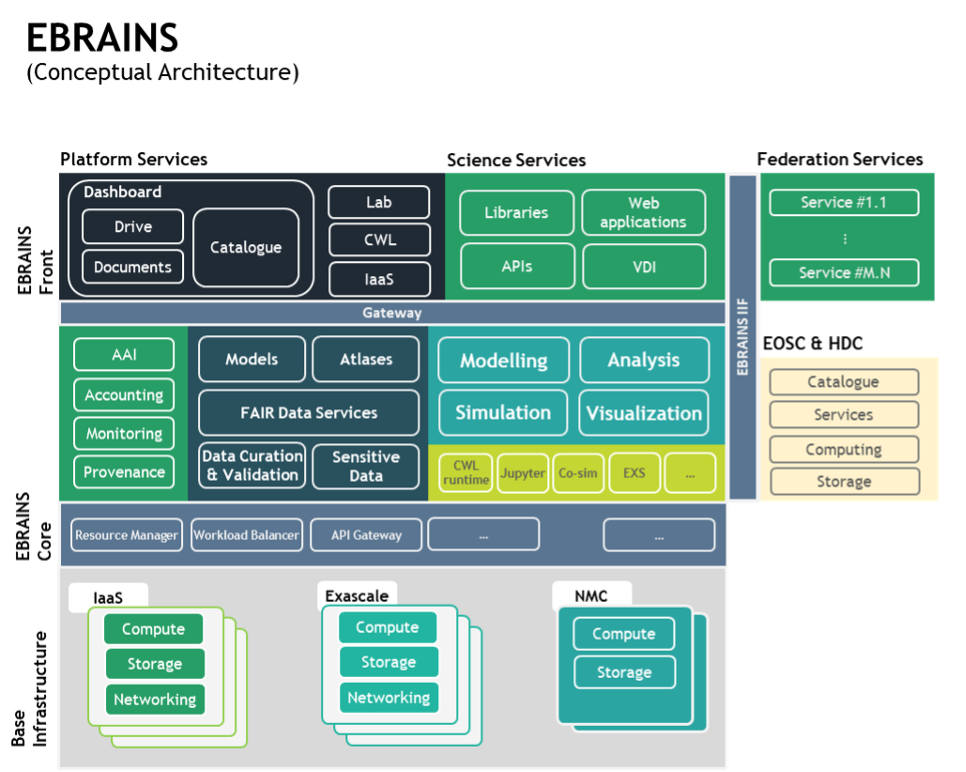

An overview of the EBRAINS conceptual architecture is presented in Figure 3 and is used for reference across the sub-sections that follow. The diagram identifies the following high-level components.

EBRAINS Front-end. This comprises the “touchpoints” of the RI, i.e., the interfaces, facilities and services with which users, e.g., neuroscientists, interact. We identify three distinct types.

First, the Platform services, which integrate, encapsulate and showcase a common set of services available to all users. These are all considered non-science services (i.e., they serve scientific endeavour but are not directly science-related), enabling collaboration, file/data sharing, and access to key runtime environments provided as a platform (Platform-as-a-Service; PaaS) in which scientific output can be discovered, executed and shared. In their entirety, they adhere to open science principles and are fully aligned with the EOSC conceptual architecture.

- Dashboard: the main entry point for all EBRAINS users, in which they can discover and manage access and tiers to all EBRAINS offerings, collaboratively organise and share assets (e.g., files, data, notebooks, workflows) into collections, manage their account details (e.g., ORCID, groups), discover and participate in thematic groups, request assistance via the Helpdesk, access documentation and best practice examples, etc.

- Catalogue: showcases the assets of EBRAINS (e.g., models, atlases, data) in an intuitive and streamlined manner via intelligent search, filtering, discovery and recommender services, enabling users to find, view, copy, build upon and experiment with them.

- EFSS: the Enterprise File Sharing and Sync service, providing powerful, secure and collaborative services with which users can easily upload, share, manage and extract scientific value from their assets across all EBRAINS services in a uniform manner.

- IaaS acquisition: enables users to request, receive, and manage access to IaaS resources (e.g., VMs, storage, networking), exposing the corresponding facilities of the Base Infrastructure layer (described later in this section) to address cases where the tiered EBRAINS offerings (e.g., storage quota) are not adequate for supporting a specific scientific endeavour. Further, users can access VMs and containers with pre-configured and quality-approved EBRAINS software, minimising setup and management effort.

- Notebooks: provide access to a scalable and secure interactive compute environment based on JupyterLab, enabling users to edit, learn, experiment and share Jupyter notebooks across multiple tiered and standardised processing environments (e.g., kernels, GPU access). Notebooks is the first PaaS offering of EBRAINS, as it is ideally suited to providing a complete development and experimentation platform through a simple web browser that can tap into all base infrastructure resources of EBRAINS (i.e., from standard cloud clusters up to HPC and NMC).

- CWL (Common Workflow Language): allows users to discover, edit, experiment with and invoke highly complex, reusable, interoperable, scalable and reproducible scientific processing workflows based on the CWL standard. Like Notebooks, it is one of the PaaS offerings of EBRAINS, but it is suited to even more complex scientific computations and endeavours that are by definition portable across various base infrastructure resources and communities.
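To give a feel for what a CWL description looks like, the following minimal sketch builds a one-step `CommandLineTool` that wraps `echo` and serialises it. CWL documents are usually written in YAML, but the standard also accepts JSON, which is what the sketch emits. The tool itself and the `to_cwl_json` helper are illustrative assumptions and are not taken from any EBRAINS workflow.

```python
import json

# Minimal CWL CommandLineTool description, built as a plain dictionary.
# The tool only wraps `echo`; it stands in for a real processing step.
echo_tool = {
    "cwlVersion": "v1.2",
    "class": "CommandLineTool",
    "baseCommand": "echo",
    "inputs": {
        "message": {
            "type": "string",
            # Place the value as the first positional argument of `echo`.
            "inputBinding": {"position": 1},
        }
    },
    "outputs": [],
}


def to_cwl_json(doc: dict) -> str:
    """Serialise a CWL description to JSON text (hypothetical helper)."""
    return json.dumps(doc, indent=2)


if __name__ == "__main__":
    print(to_cwl_json(echo_tool))
```

Saved to a file, a description like this could be handed to a CWL runner such as the reference implementation `cwltool`, together with a value for `message`. The same file should then run unchanged on any compliant runner, which is the portability property highlighted above.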

Second, the Science services comprise a collection of science-focused software, libraries and environments, each serving a specific and specialised scientific goal. These can be:

- Libraries and APIs, prepared and packaged by EBRAINS in a quality-assured manner following our technical guidelines (see WP5) and ready to be used across notebooks and CWL workflows (hence powering the PaaS offerings), or standalone and integrated into third-party applications, systems and services that build upon their core offerings. Overall, EBRAINS will provide access to more than 100 such libraries and APIs as building blocks for its users and community members to reuse, integrate and expand.

- Web applications with their own front-end and full-stack deployment, fully integrated with all platform services to enable uniform, secure and streamlined access to data, models, atlases and services. This is the main Software-as-a-Service (SaaS) offering of EBRAINS, giving users access to feature-complete and thematically specialised scientific environments (e.g., TVB) with one account (Single Sign-On; SSO). All web applications are provided by EBRAINS and thus adhere to strict quality, availability and interoperability standards, rather than being managed on a delegated and best-effort basis as was the case for SGA3.

- Virtual Desktop Interfaces (VDIs) offer secure and dedicated access to desktop applications and environments over the web, so that users do not have to spend effort and time installing, deploying or managing them. This is the second SaaS offering of EBRAINS, with the aim of providing access to useful desktop-only science applications (i.e., a web application is not available or suitable) that require a dedicated hardware stack (e.g., attached GPUs for visualisation), or isolation and security from all other offerings (e.g., processing of sensitive data).

Third, the Federated Services of the National Nodes are available to EBRAINS users, with a clear delineation in terms of their provision details. While these services are discoverable by EBRAINS users, and they can access them via the AAI and the EBRAINS Infrastructure Interoperability Framework (IIF), the actual provision of these services falls under the scope of the National Nodes. The entire EBRAINS Front-End interacts with the EBRAINS Core via a number of API Gateways, affording increased security, standardisation, scalability and flexibility.
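The role of an API Gateway between the Front-End and the Core can be illustrated with a minimal sketch: every request passes one authentication check and is then routed to the responsible service. The route table, the token set and the two handler functions are hypothetical placeholders; a real gateway would validate tokens against the AAI rather than a hard-coded set.

```python
from __future__ import annotations

from typing import Callable, Optional

# Hypothetical token store, for illustration only.
VALID_TOKENS = {"demo-token"}


def catalogue_service(path: str) -> str:
    return f"catalogue handled {path}"


def notebooks_service(path: str) -> str:
    return f"notebooks handled {path}"


# Hypothetical mapping from path prefix to the core service behind it.
ROUTES: dict[str, Callable[[str], str]] = {
    "/catalogue": catalogue_service,
    "/notebooks": notebooks_service,
}


def gateway(path: str, token: Optional[str]) -> tuple[int, str]:
    """Authenticate once at the edge, then dispatch by path prefix."""
    if token not in VALID_TOKENS:
        return 401, "unauthenticated"
    for prefix, handler in ROUTES.items():
        if path == prefix or path.startswith(prefix + "/"):
            return 200, handler(path)
    return 404, "no such service"


if __name__ == "__main__":
    print(gateway("/catalogue/models", "demo-token"))
    print(gateway("/catalogue/models", None))
```

Centralising the check in one place is what allows the security and standardisation benefits mentioned above: individual services behind the gateway do not each need their own entry-point logic.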

EBRAINS Core. This comprises a collection of horizontal enabler services and functionality for the front-end, which can be conceptually organised into five groups depending on their nature and purpose of use. First, we identify (green box) components for accessing and monitoring access to EBRAINS (e.g., AAI, accounting). Second, we identify (dark grey box) the key information assets of EBRAINS, i.e., models, atlases, curation and sensitive data services (Trusted Research Environments/TREs). These are made available to users via the Platform and Science services of the previous layer. Third, we identify (light green box) the foundational modelling, analysis, simulation and visualisation software that exploits the information assets of EBRAINS and makes them available as capabilities to Science services. Fourth, a collection of additional components (lighter green box) encapsulates the runtime environments that serve the front-end Platform and Science services, such as the CWL runtime, Jupyter notebooks, the co-simulation framework, data proxies, etc. Fifth, a series of components (dark grey box) is positioned to mediate, manage and orchestrate between the Core and the Base Infrastructure, such as the Resource Manager (managing EBRAINS-allocated IaaS resources), the Workload Balancer (PaaS/container orchestration framework), the internal API Gateway, etc. Finally, at this lower level we observe the integration with EOSC, Data Spaces and the HDC (see WP5 for details).
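As a rough illustration of what a Resource Manager for allocated IaaS resources might do, the sketch below tracks a fixed storage pool and grants capacity beyond a user's tier quota only while the pool lasts. The class, its method names, the tier names and all numbers are assumptions for the example; the text does not describe the actual allocation policy.

```python
from __future__ import annotations


class ResourceManager:
    """Tracks a fixed pool of storage (in GB) allocated to the platform."""

    def __init__(self, pool_gb: int, tier_quota_gb: dict[str, int]) -> None:
        self.free_gb = pool_gb
        self.tier_quota_gb = tier_quota_gb
        self.granted: dict[str, int] = {}  # extra GB granted per user

    def request(self, user: str, tier: str, wanted_gb: int) -> str:
        quota = self.tier_quota_gb[tier]
        if wanted_gb <= quota:
            # The standard tiered offering already covers the request.
            return "covered by tier"
        extra = wanted_gb - quota
        if extra > self.free_gb:
            return "rejected: pool exhausted"
        self.free_gb -= extra
        self.granted[user] = self.granted.get(user, 0) + extra
        return f"granted {extra} GB extra"


if __name__ == "__main__":
    manager = ResourceManager(pool_gb=100, tier_quota_gb={"basic": 10})
    print(manager.request("alice", "basic", 60))
```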

Base Infrastructure. This comprises the Cloud (IaaS), HPC/Exascale and NMC infrastructure allocated to EBRAINS and its users, which host and power all preceding layers. Further, it facilitates access and ensures technological readiness for tapping into Future Compute and National Compute Resources allocated to EBRAINS and its users. EBRAINS flexibly harnesses computing and storage resources from HPC/exascale (EuroHPC/PRACE, FENIX) and cloud providers (FENIX, commercial, private, hybrid) in a manner that ensures scalability, ease of availability, provider independence and a uniform experience for its users. The key considerations which influenced our design decisions are based on the learnings from SGA3 and are the following:

- Low cost. The total cost for procuring/purchasing, provisioning and operating base infrastructure resources should be minimised as much as possible, as it comprises a sizable cost centre, and thus risk, for the sustainable operation of EBRAINS. Where possible and relevant, we favour low-cost and commoditized resources and services, with high scalability, and exploit them to scale up/down EBRAINS (and its costs) according to user demand, service workloads, utilisation and strategic priorities. Towards this, we will explicitly use commercial EU-based cloud offerings, in instances where these are legally, ethically, and technically sound.

- Standardisation. In SGA3 of the HBP, the first version of EBRAINS and its base infrastructure layer (FENIX) developed and matured in parallel. The know-how and clarity gained in terms of EBRAINS requirements allow us to define the technical requirements from the base infrastructure in a manner that covers current and future needs in a cost-effective manner while respecting the individual roadmaps and operational capacities of the base infrastructure providers. This also enables EBRAINS to satisfy its user demands, negating the observed mismatch regarding the type/availability of computing resources.

- Vendor independence. The long-term lifecycle of EBRAINS, during which base infrastructure providers, their technology offerings and associated cost centres may change, requires a high level of flexibility in terms of where EBRAINS is deployed and how efficiently it can be redeployed and allocated new base infrastructure resources. This is expressed as increased operational sovereignty and portability, achieved by minimising and/or standardising dependence on external resource providers for core internal functions (e.g., access policies, resource allocation, open/de facto standards).
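The considerations above can be combined in a small sketch: providers are addressed through one standard interface (vendor independence), capacity is sized from current demand (scaling up/down), and the cheapest provider able to host that capacity is chosen (low cost). The `Provider` protocol, the `StaticProvider` class, the site names and all prices are hypothetical and serve only to make the idea concrete.

```python
from __future__ import annotations

import math
from dataclasses import dataclass
from typing import Optional, Protocol, Sequence


class Provider(Protocol):
    """Standard interface every base infrastructure provider must satisfy."""

    name: str

    def cost_per_node_hour(self) -> float: ...

    def max_nodes(self) -> int: ...


@dataclass
class StaticProvider:
    name: str
    price: float   # cost per node-hour, arbitrary currency unit
    capacity: int  # nodes this provider can offer

    def cost_per_node_hour(self) -> float:
        return self.price

    def max_nodes(self) -> int:
        return self.capacity


def nodes_needed(active_users: int, users_per_node: int) -> int:
    """Size the deployment from current demand, rounding up."""
    return math.ceil(active_users / users_per_node)


def cheapest_provider(
    providers: Sequence[Provider], nodes: int
) -> Optional[Provider]:
    """Pick the lowest-cost provider that can host the requested nodes."""
    candidates = [p for p in providers if p.max_nodes() >= nodes]
    if not candidates:
        return None
    return min(candidates, key=lambda p: p.cost_per_node_hour())


if __name__ == "__main__":
    sites = [StaticProvider("site-a", 0.50, 10), StaticProvider("site-b", 0.30, 4)]
    wanted = nodes_needed(active_users=90, users_per_node=20)
    chosen = cheapest_provider(sites, wanted)
    print(wanted, chosen.name if chosen else "none")
```

Because the selection logic depends only on the `Provider` interface, replacing or adding a provider means writing one new adapter class rather than changing the platform code.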